The Data Gap Opportunity: How Invisible Women Reveals Mispriced Markets

The investing lessons from the book Invisible Women

Markets are supposed to be efficient, but efficiency depends on the quality of the data market participants use to make decisions.

Invisible Women by Caroline Criado Perez is a public policy and social advocacy book. Its underlying thread is the systematic failure to collect and analyze data on half the population: women.

The result is that products, policies, infrastructure, and algorithms built on this incomplete data are systematically wrong. Products do not fit women’s bodies. Medical treatments are tested largely on men. AI systems are trained on male-default datasets. Urban infrastructure is designed around male commuting patterns.

Every one of these issues affects investing because “systematically wrong” often means “systematically mispriced.” The gender data gap is a structural market inefficiency, and inefficiencies create opportunities.

I am going to try to translate the book’s central thesis into a framework that public market investors can use to identify mispriced opportunities, hidden risks, and underappreciated secular trends.

The $12 Trillion Misallocation: Unpaid Labor as Invisible GDP

McKinsey estimated in 2015 that closing the gender gap in labor force participation could add $12 trillion to global GDP by 2025.

Between 1970 and 2009, the addition of 38 million women to the US labor force made GDP 25% larger than it otherwise would have been. Every percentage-point increase in women’s employment yields a disproportionate increase in GDP.

Any country that wants faster GDP growth should tap into this economic reservoir, but the major hurdle is unpaid care work.

Globally, women do 75% of unpaid care work, spending between three and six hours per day on it compared to men’s thirty minutes to two hours. This unpaid labor subsidizes the entire economy, yet it is invisible in traditional economic measurement. When governments cut public services, demand does not disappear. The work simply shifts onto women, reducing their participation in paid labor and weighing on GDP.

The companies and countries that enable women’s participation in the paid workforce through childcare infrastructure, flexible work, and parental leave are not going “woke,” and they are not shifting the burden of childcare costs from people who want kids to people who do not. They are unlocking another reservoir of economic growth.

Studies cited in the book showed that a decrease in the time British women spend on unpaid work from five hours to three correlated with a 10% increase in their paid labor-force participation. Higher participation rates, in turn, correspond to higher GDP growth that benefits everyone.

The Care Economy as Infrastructure Investment

The book makes the case that the care economy — childcare, elder care, domestic services — is genuine economic infrastructure.

Early childhood education was found to have a greater positive impact on long-term economic growth than business subsidies, adding an estimated 3.5% to GDP by 2080. Adults who received ECE were more likely to be employed (76% vs. 62%), to own homes, and to have savings accounts. In New York, before the introduction of universal preschool, more than a third of families waitlisted for childcare assistance lost their jobs or were unable to work.

Investing 2% of GDP in the caring industries would create nearly 13 million new jobs in the US, compared to 7.5 million from the same investment in construction — the traditional “infrastructure” play. The UK’s Women’s Budget Group found that investing 2% of GDP in public care services would create almost as many jobs for men as investing in construction, but up to four times as many jobs for women.

Investors typically associate “infrastructure” with roads, bridges, and broadband. But sometimes you need to do the cost-benefit analysis with better data. Maybe it’s cheaper in the long run to invest in social infrastructure if it reduces lost labor costs, improves workforce participation, and drives GDP growth.

The Venture Capital Blind Spot

93% of venture capitalists are men. And men back men.

Research by the Boston Consulting Group (BCG) found that female-owned startups generate 78 cents of revenue per dollar of funding, compared to 31 cents for male-owned startups. They also generate 10% more cumulative revenue over five years.
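To make the capital-efficiency gap concrete, the short sketch below turns the two BCG figures quoted above into a ratio. The funding amount is a hypothetical round number chosen only for illustration, and the function and variable names are my own.

```python
# Revenue generated per dollar of funding, as quoted from the BCG research above.
REVENUE_PER_DOLLAR = {"female_owned": 0.78, "male_owned": 0.31}


def implied_revenue(funding: float, group: str) -> float:
    """Revenue implied by applying the quoted revenue-per-dollar figure to a funding amount."""
    return funding * REVENUE_PER_DOLLAR[group]


# Hypothetical funding amount, used only for illustration.
funding = 1_000_000
female = implied_revenue(funding, "female_owned")
male = implied_revenue(funding, "male_owned")
efficiency_ratio = female / male

print(f"Female-owned: ${female:,.0f}  Male-owned: ${male:,.0f}")
print(f"Capital-efficiency ratio: {efficiency_ratio:.2f}x")
```

On these figures, each dollar of funding in a female-owned startup produced roughly two and a half times the revenue of a dollar in a male-owned one.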

This is one of the starkest examples of a market inefficiency driven by a data gap. The entire venture capital and growth equity pipeline is systematically mispricing female-led companies because the capital allocators evaluating them are pattern-matching against a default founder profile that looks like themselves.

For public market investors, this matters because the mispricing doesn’t stop at Series A. Companies that were underfunded in their early stages carry that disadvantage into the public markets. And the same pattern-matching bias that causes VCs to underweight female founders likely causes public market analysts to underweight female leadership more broadly.

Diverse Leadership as Mispriced Alpha

S&P Global found that within 24 months of taking office, female CEOs saw a 20% increase in stock price compared to their male counterparts. A portfolio simulation tracking companies led by women CEOs from 2002 to 2014 yielded a 348% return. The S&P 500 returned 122% over the same period. S&P 500 firms currently led by women CEOs have, on average, a 53% higher market cap and 68% higher net income than their male-led counterparts.
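Cumulative returns over a long window are easier to compare once they are annualized. The sketch below converts the two cumulative figures above into compound annual growth rates. It assumes a 12-year holding period (2002 to 2014) and treats both figures as total returns over that same window; the source confirms neither assumption.

```python
def annualized_return(cumulative_return: float, years: float) -> float:
    """Convert a cumulative return (3.48 means +348%) into a compound annual growth rate."""
    if years <= 0:
        raise ValueError("years must be positive")
    return (1.0 + cumulative_return) ** (1.0 / years) - 1.0


YEARS = 12  # assumption: 2002 to 2014 treated as 12 full years

women_led = annualized_return(3.48, YEARS)
sp500 = annualized_return(1.22, YEARS)

print(f"Women-led portfolio: {women_led:.1%} per year")
print(f"S&P 500:             {sp500:.1%} per year")
print(f"Annualized spread:   {(women_led - sp500) * 100:.1f} percentage points")
```

Under those assumptions the gap works out to roughly 13% versus 7% a year.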

The CFO data is equally compelling. OneStream’s 2025 “Glass Chair” study, which analyzed over 1,100 finance leaders, found that underperforming companies that appointed a woman as CFO saw total shareholder return improve by an average of 10% relative to industry benchmarks. Publicly listed companies with women CFOs in the US, UK, and Europe delivered an average annualized shareholder return of 4.5% during their tenures, outperforming industry benchmarks by approximately 0.2% per year. That may sound small, but compounded over a decade it’s material.
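To see how a small annual edge accumulates, the sketch below compounds the two return streams over ten years. It assumes the 0.2% is an additive spread in percentage points, so the benchmark is taken to return 4.3% a year against 4.5% for the companies with women CFOs. The starting value of 100 is arbitrary.

```python
def compound(start_value: float, annual_return: float, years: int) -> float:
    """Value of start_value after compounding annual_return for the given number of years."""
    return start_value * (1.0 + annual_return) ** years


START = 100.0                      # arbitrary starting value
YEARS = 10
PORTFOLIO_RETURN = 0.045           # annualized return quoted above
BENCHMARK_RETURN = 0.045 - 0.002   # assumption: 0.2 percentage-point spread

portfolio_value = compound(START, PORTFOLIO_RETURN, YEARS)
benchmark_value = compound(START, BENCHMARK_RETURN, YEARS)
gap = portfolio_value - benchmark_value

print(f"Portfolio after {YEARS} years: {portfolio_value:.1f}")
print(f"Benchmark after {YEARS} years: {benchmark_value:.1f}")
print(f"Cumulative gap: {gap:.1f} per 100 invested")
```

On these assumptions the gap after a decade is about three points per 100 invested, and it keeps widening the longer the spread persists.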

Why the outperformance?

Research consistently shows that companies with female CFOs exhibit more conservative accounting, fewer financial restatements, lower levels of corporate overinvestment, and reduced stock-price crash risk. They tend to manage earnings more conservatively and maintain stronger internal controls.

The outperformance is especially pronounced during periods of stress. During financial crises — including the 2008 financial crisis and COVID-19 — firms with female leadership tended to achieve better returns, higher stability, and lower risk.

On the portfolio management side, Fidelity research found that women tend to trade less and hold firm during volatility, leading to higher risk-adjusted returns.

Another BCG study found that companies with above-average management diversity reported innovation revenue 19 percentage points higher than companies with below-average diversity. Diverse companies generated 45% of their total revenue from innovation, compared to just 26% for less diverse companies. McKinsey’s research, updated through 2023, found that companies in the top quartile for gender diversity on executive teams are now 39% more likely to outperform on profitability — up from 25% in their earlier studies.

Diverse teams are better informed about their actual customers. For pretty much every product out there, half of your customers are women. And when half your end-users are women, having women in leadership isn’t a diversity initiative. It’s a necessity. It’s the difference between designing products that actually fit your market and designing products that fit the assumptions of a homogeneous leadership team.

And yet, women still hold only 9% of CEO roles in the S&P 500. If the market were efficient at pricing leadership quality, this gap between performance data and representation would have closed long ago. The fact that it hasn’t suggests that the same male-default thinking Criado Perez documents in product design, urban planning, and medical research is also operating in how investors evaluate management teams. Female leadership remains structurally undervalued.

Product Markets Designed for Half the Population

The book is filled with examples of products that fail women because they were designed by and for men. Smartphones too large for women’s hands. Voice-recognition software 70% more accurate for male speech. Car crash-test dummies based exclusively on male bodies, which makes women 47% more likely to be seriously injured and 17% more likely to die in a car crash.

Every one of these design failures represents an addressable market.

Consider the breast pump market, estimated at $700 million with room to grow, dominated by a single company (Medela) selling products that users widely find inadequate. More women buy iPhones than men — so why are iPhones still designed more for men’s hands? The women’s health tech (femtech) space covers pelvic-floor health, menstruation tracking, and fertility — conditions affecting 50% of the population — yet the sector remains chronically underfunded.

Companies that close these product-design gaps don’t just capture underserved demand. They build switching costs from genuine consumer loyalty.

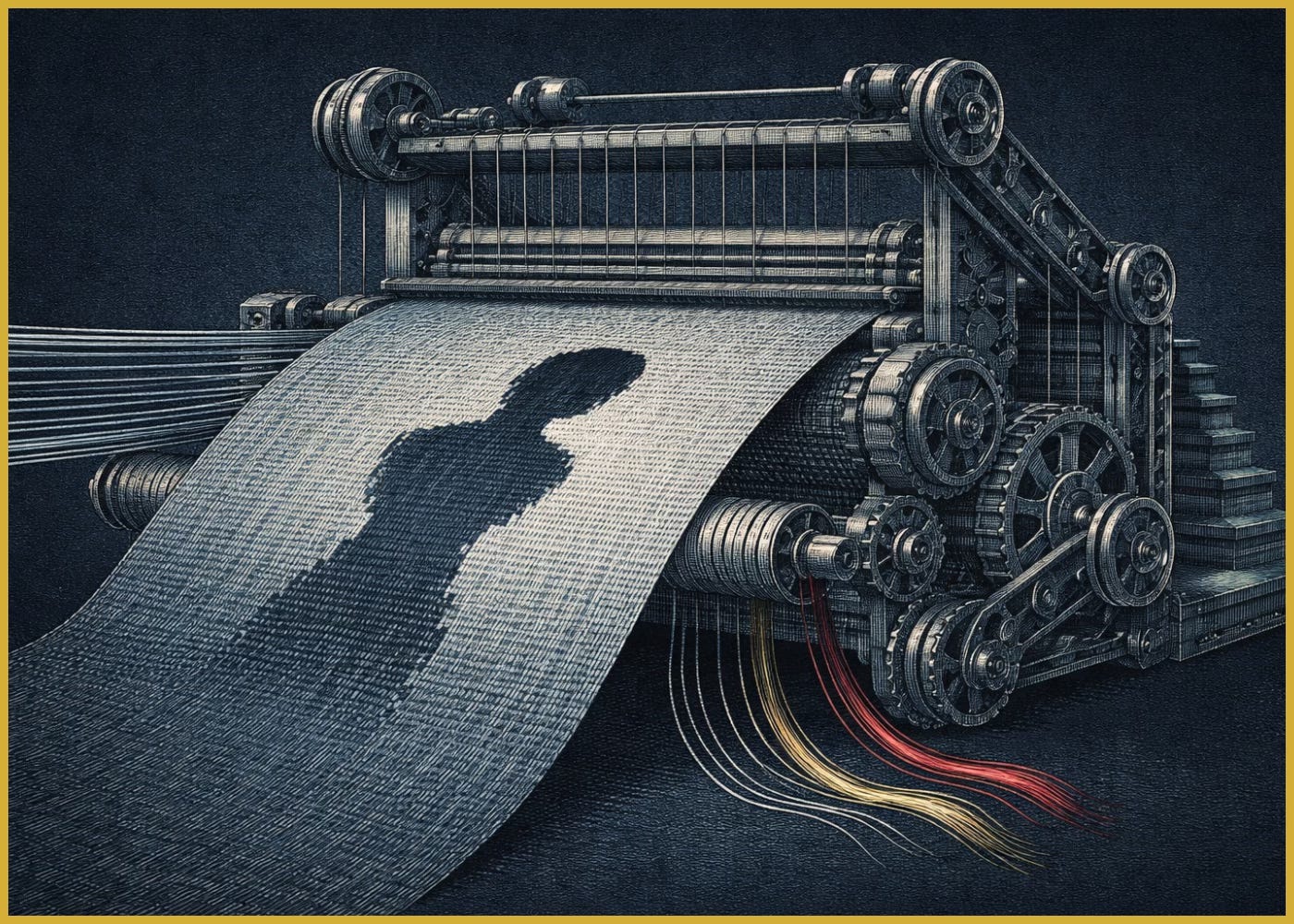

AI and Algorithms: Compounding the Gender Data Gap

The most important part of the book for investors and market participants going forward is the section on technology, algorithms, and AI. It comes down to a simple question: who controls and codes the algorithm, and what data do they use?

The book’s argument is that AI systems trained on historically biased data don’t just replicate the gender data gap; they amplify it at scale.

The compounding works like this: historical data is male-default because medical trials, employment records, speech corpora, and image datasets were all built with male subjects as the norm. Algorithms trained on this data encode the bias as objective truth.

The outputs then shape future data collection, creating a self-reinforcing feedback loop. Each cycle widens the gap.
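The loop described above can be sketched as a toy model. This is my own simplification, not something taken from the book: it assumes the model weights records from the under-represented group at a fixed fraction of the majority's, and that each cycle's outputs become the next cycle's training data. The starting share and the weight are hypothetical.

```python
def next_share(share: float, relative_weight: float) -> float:
    """One cycle of the loop: the under-represented group's share of newly generated data.

    relative_weight < 1 means the model favors the majority group when producing
    the outputs that become the next round of training data.
    """
    weighted_minority = share * relative_weight
    weighted_majority = 1.0 - share
    return weighted_minority / (weighted_minority + weighted_majority)


def simulate(initial_share: float, relative_weight: float, cycles: int) -> list[float]:
    """Track the under-represented group's data share across repeated retraining cycles."""
    shares = [initial_share]
    for _ in range(cycles):
        shares.append(next_share(shares[-1], relative_weight))
    return shares


# Hypothetical starting point: women are 30% of the data, and the trained model
# weights their records at 80% of men's.
shares = simulate(initial_share=0.30, relative_weight=0.80, cycles=5)
for cycle, share in enumerate(shares):
    print(f"cycle {cycle}: women's share of data = {share:.1%}")
```

With any weight below 1 the share falls every cycle, and with a weight of exactly 1 it stays put. That is the point of the argument: even a modest learned bias compounds when outputs are fed back in as data.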

The most glaring examples exposed in recent history involved hiring algorithms at Gild and Amazon.

Gild built hiring algorithms that inadvertently disadvantaged women by treating male behavioral patterns, like contributing to open-source code on GitHub at nights and on weekends, as proxies for talent. Women with unpaid care responsibilities are less able to code at midnight on a Saturday. The algorithm did not account for this because it was built predominantly by men who did not have experience living and working as a woman. The intent was not to be sexist, but training on biased data was enough to reinforce the bias.

Amazon’s now-infamous AI hiring tool ran into the same problem and had to be scrapped. Built to score candidates by learning from a decade of past resumes, it effectively learned that “male” signifiers were a proxy for “qualified” and began downgrading women’s applications for technical roles. Engineers tried to patch the model by stripping out explicitly gendered terms, but the bias kept resurfacing in subtler patterns, and Amazon ultimately abandoned the project rather than ship a system that automated discrimination.

In general, 72% of US CVs never reach human eyes. Interview algorithms are being trained on the posture, facial expressions, and vocal tone of “top-performing employees.” Were those top performers diverse in gender and ethnicity? Does the algorithm account for socialized gender differences in tone and facial expression? We don’t know, because the companies developing these products don’t share their algorithms.

And not knowing what data these systems are trained on, or what data gaps exist, can be dire, especially when the decisions are medical.

The book documents a medical knowledge base heavily skewed toward the male body. With AI diagnostics being built on that foundation, there’s a real risk that these systems make diagnoses for women worse, not better. The second most common adverse drug reaction in women is that the drug simply doesn’t work. Fewer than half of publicly available prescription drugs have been analyzed for sex differences. Building AI on top of this data without correcting for it is scaling a known deficiency.

I see the following investment implications.

Regulatory risk. As governments scrutinize algorithmic bias — the EU AI Act being the most prominent example — companies deploying biased AI face significant legal and reputational liability. Investors will need to evaluate AI-dependent companies not just on model performance but on training data composition, bias auditing practices, and transparency. The companies that can’t answer these questions are carrying an undisclosed risk.

Companies that solve for this bias can capture enormous value. Whoever builds medical AI that works for women, recruiting algorithms that select for talent rather than male behavioral defaults, or other AI-driven innovation trained on data competitors are missing will have a structural advantage. Today’s AI ecosystem is potentially leaving half the market underserved, which creates a large addressable market.

There is also a dangerous information feedback loop for investors. If the data and analytical tools investors use are built on the same male-default assumptions, then investment analysis itself inherits the gender data gap. Quantitative strategies that rely on NLP, sentiment analysis, or alternative data should be scrutinized for the same biases. If today’s investment and trading algorithms are making decisions on incomplete data, then the firm that incorporates the gender data gap into its decisions can gain a meaningful competitive advantage.
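As a rough illustration of what checking training data composition and auditing for bias might involve at the most basic level, the sketch below computes each group's share of a dataset and the ratio of selection rates between groups. The records, field names, and function names are hypothetical, and a real audit would go well beyond these two numbers.

```python
from collections import Counter


def group_shares(records: list[dict], group_key: str) -> dict[str, float]:
    """Fraction of records belonging to each group."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {group: n / total for group, n in counts.items()}


def selection_rates(records: list[dict], group_key: str, outcome_key: str) -> dict[str, float]:
    """Share of each group that received a positive outcome (e.g. advanced to interview)."""
    totals, selected = Counter(), Counter()
    for r in records:
        totals[r[group_key]] += 1
        selected[r[group_key]] += 1 if r[outcome_key] else 0
    return {group: selected[group] / totals[group] for group in totals}


# Hypothetical screening results: 6 men (4 advanced), 4 women (1 advanced).
records = (
    [{"sex": "M", "advanced": True}] * 4
    + [{"sex": "M", "advanced": False}] * 2
    + [{"sex": "F", "advanced": True}] * 1
    + [{"sex": "F", "advanced": False}] * 3
)

shares = group_shares(records, "sex")
rates = selection_rates(records, "sex", "advanced")
rate_ratio = rates["F"] / rates["M"]

print("Data composition:", shares)
print("Selection rates:", rates)
print(f"Selection-rate ratio (F/M): {rate_ratio:.2f}")
```

A ratio well below 1 does not prove discrimination on its own, but it is the kind of number an investor could reasonably ask an AI-dependent company to produce and explain.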

Closing the Data Gap Is an Alpha-Generating Strategy

Again, the book uses data, or, more importantly, the lack of sex-disaggregated data, as a social advocacy tool to push for better policies to improve the lives of half the world’s population and to make economies and governments work better for everyone.

But from an investor viewpoint, I see a massive, structural market inefficiency.

The inefficiency persists for the same reason most market inefficiencies persist: the people making the decisions do not see it. Male-default thinking is so deeply embedded that it reads as neutral. Products designed for men feel like products designed for everyone. Data collected on men feels like data collected on humans. Algorithms trained on male behavior feel like algorithms trained on merit.

But neutrality requires evidence. And the evidence, once you look for it, overwhelmingly shows that the default is not neutral at all.

Companies and investors who recognize this can find mispriced opportunities, avoid hidden risks, and position themselves ahead of regulatory and demographic shifts.

Invisible Women: Data Bias in a World Designed for Men